Breaking News

Doctors' AI Systems Are Hallucinating Nonexistent Medical Issues During Appointments...

Finland's Sand Battery Delivers Cheaper Heat with 70% Lower Emissions

Trump's Peace Plan: Middle East Unites Against Iran #shorts

Investors have never used this much leverage:

Top Tech News

Sodium Ion Batteries Can Reach 100 Gigawatt-Hours Per Year Scale in 2027

Juiced Bikes proves capable electric motorcycles don't have to cost a lot

Headlight projectors turn your car into a drive-in theater

US To Develop Small Modular Nuclear Reactors For Commercial Shipping

New York Mandates Kill Switch and Surveillance Software in Your 3D Printer ...

Cameco Sees As Many As 20 AP1000 Nuclear Reactors On The Horizon

His grandparents had heart disease.

At 11, Laurent Simons decided he wanted to fight aging.

Mayo Clinic's AI Can Detect Pancreatic Cancer up to 3 Years Before Diagnosis–When Treatment...

A multi-terrain robot from China is going viral, not because of raw speed or power...

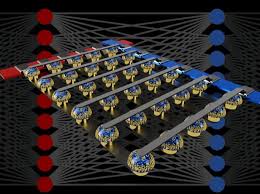

IBM Investing $2 Billion in an AI Center and Targets 1000 Times Improvement by 2029

IBM is partnering with the State University of New York (SUNY) to develop an AI Hardware Center at SUNY Polytechnic Institute in Albany. New York State will also contribute a $300 million subsidy.

The IBM Research AI Hardware Center is intended to help IBM and its partner ecosystem achieve a 1,000x improvement in AI performance efficiency over the next decade. IBM plans to overcome current machine-learning limitations with two approaches: approximate computing with Digital AI Cores and in-memory computing with Analog AI Cores.
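
A 1,000x target over ten years is easier to interpret as a yearly rate. The short sketch below assumes, purely for illustration, that the gain compounds evenly over exactly ten years (the article gives only the endpoint, not a yearly schedule). Under that assumption the target works out to roughly a doubling of efficiency every year.

```python
# Back-of-the-envelope check using only the figures quoted above.
# Assumption: the improvement compounds evenly, year over year.
target_gain = 1_000   # 1,000x improvement in AI performance efficiency
years = 10            # "over the next decade"

# A constant yearly gain g compounded over n years satisfies g ** n == target_gain,
# so g is the n-th root of the target.
annual_gain = target_gain ** (1 / years)

print(f"Required gain per year: {annual_gain:.3f}x")  # prints "Required gain per year: 1.995x"
```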

Approximate Computing with Digital AI Cores

State-of-the-art hardware platforms for training deep neural networks (DNNs) have recently moved from traditional single-precision (32-bit) computation to 16-bit precision, which is more energy efficient and uses less memory. IBM researchers have successfully trained DNNs using 8-bit floating-point numbers (FP8) while fully maintaining accuracy across a range of deep learning models and datasets.
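
To make the precision trade-off concrete, the sketch below simulates storing a value in narrower floating-point formats by rounding it to a given number of exponent and fraction bits. It only models how individual numbers are rounded and how much memory the weights take; it does not reproduce IBM's training method. The function name `round_to_float_format`, the FP8 bit layout (assumed here to be 1 sign, 5 exponent and 2 fraction bits), the saturation behavior and the 100-million-parameter model size are all illustrative assumptions.

```python
import math

# (exponent bits, fraction bits) per format; the FP8 layout is an assumption
FORMATS = {"FP32": (8, 23), "FP16": (5, 10), "FP8": (5, 2)}


def round_to_float_format(x: float, exp_bits: int, man_bits: int) -> float:
    """Hypothetical helper: round x to the nearest value representable with
    1 sign bit, `exp_bits` exponent bits and `man_bits` fraction bits, using an
    IEEE-754-style bias and subnormals. Magnitudes beyond the largest finite
    value saturate instead of overflowing to infinity. Finite inputs only."""
    if x == 0.0:
        return 0.0
    bias = 2 ** (exp_bits - 1) - 1
    min_exp = 1 - bias                   # exponent of the smallest normal number
    max_exp = bias                       # exponent of the largest finite number
    mag = abs(x)
    # floor(log2(mag)), clamped so subnormals share the smallest normal step size
    e = max(math.frexp(mag)[1] - 1, min_exp)
    step = 2.0 ** (e - man_bits)         # gap between neighboring representable values
    q = round(mag / step) * step         # round to nearest, ties to even
    largest = (2.0 - 2.0 ** -man_bits) * 2.0 ** max_exp
    return math.copysign(min(q, largest), x)


if __name__ == "__main__":
    # Fewer fraction bits means coarser rounding of each stored value.
    for name, (e_bits, m_bits) in FORMATS.items():
        q = round_to_float_format(0.1, e_bits, m_bits)
        print(f"{name}: 0.1 is stored as {q!r}")

    # Fewer bits per value also means proportionally less memory for the weights.
    n_params = 100_000_000  # hypothetical model size, for illustration only
    for name, (e_bits, m_bits) in FORMATS.items():
        total_bits = 1 + e_bits + m_bits  # sign + exponent + fraction
        print(f"{name}: {n_params * total_bits // 8 // 1_000_000} MB of weights")
```

Rounding 0.1 gives 0.0999755859375 in the 16-bit format but only 0.09375 in the assumed 8-bit layout, while weight storage halves at each step (400, 200 and 100 MB for the hypothetical model). This per-value rounding error is what makes maintaining accuracy with FP8 training noteworthy.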

The World's Biggest Fusion Reactor Just Hit A Milestone