AI voice cloning from a few seconds of voice sampling is real and rapidly improving

Examples are available of original speech recordings and of speech synthesized from different numbers of cloning samples. The synthesized speech had some noise distortion, but it did sound like the original speakers.

Baidu attempted to learn speaker characteristics from only a few utterances (i.e., sentences a few seconds long). This problem is commonly known as "voice cloning." Voice cloning is expected to have significant applications in personalizing human-machine interfaces.

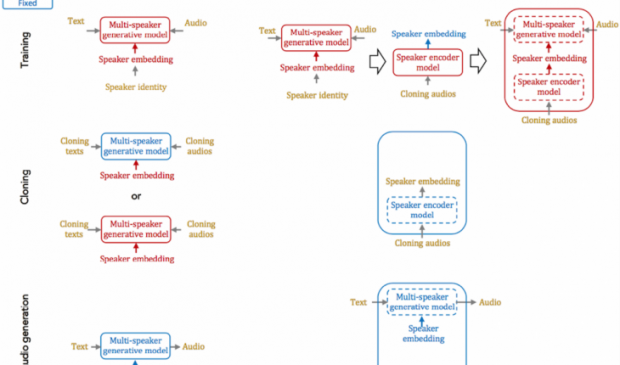

They tried two fundamental approaches to voice cloning: speaker adaptation and speaker encoding.

Speaker adaptation fine-tunes a multi-speaker generative model on a few cloning samples using backpropagation-based optimization. Adaptation can be applied to the whole model or only to the low-dimensional speaker embeddings. The latter needs far fewer parameters to represent each speaker, although it yields a longer cloning time and lower audio quality.
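To make the embedding-only variant concrete, here is a minimal sketch in plain Python. It is not Baidu's implementation: a tiny fixed linear map `W` stands in for the frozen multi-speaker generative model, and the names `adapt_embedding`, `matvec`, and `loss`, along with all the numbers, are assumptions chosen for illustration. Only the speaker embedding is updated by gradient descent; the model weights never change.

```python
import random


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def loss(W, e, samples):
    """Mean squared error between the model output for embedding e and the samples."""
    pred = matvec(W, e)
    total = sum((p - t) ** 2 for y in samples for p, t in zip(pred, y))
    return total / len(samples)


def adapt_embedding(W, samples, dim, steps=50, lr=0.1):
    """Fit only the speaker embedding; W (the stand-in model) stays frozen."""
    e = [0.0] * dim
    n = len(samples)
    for _ in range(steps):
        pred = matvec(W, e)
        grad = [0.0] * dim
        for y in samples:
            for i, row in enumerate(W):
                residual = pred[i] - y[i]
                for j in range(dim):
                    # d/de_j of (pred_i - y_i)^2, averaged over samples
                    grad[j] += 2.0 * residual * row[j] / n
        e = [ej - lr * gj for ej, gj in zip(e, grad)]
    return e


# Hypothetical frozen "model": 4 output features, 2-dimensional embedding.
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]

# Hypothetical new speaker and a handful of noisy "cloning samples".
rng = random.Random(0)
true_e = [0.5, -1.0]
clean = matvec(W, true_e)
samples = [[c + rng.gauss(0, 0.01) for c in clean] for _ in range(5)]

e_hat = adapt_embedding(W, samples, dim=2)
```

In this toy setup only two numbers are learned per speaker, which mirrors the small per-speaker footprint described above. Whole-model adaptation would also update `W`, trading many more per-speaker parameters for a closer fit.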