Breaking News

BREAKING: 20-30 Gunshots Heard Near White House - Pool Reporters Run Inside Press Briefing Room

EXCLUSIVE VIDEO: Inside the Successful Operation to Rescue Dogs From Hideous Experimentations

U.S. and Iran are expected to announce the finalization of a draft proposal of a peace deal...

Should You Water Your Garden Every Day? (Most Gardeners Get This Wrong)

Top Tech News

Cars Are Fast Becoming Dystopian Prison Pods...

Our Emergency Water Plan Wasn't Good Enough - So We Built This

Sodium Ion Batteries Can Reach 100 Gigawatt Per Hour Per Year Scale in 2027

Juiced Bikes proves capable electric motorcycles don't have to cost a lot

Headlight projectors turn your car into a drive-in theater

US To Develop Small Modular Nuclear Reactors For Commercial Shipping

New York Mandates Kill Switch and Surveillance Software in Your 3D Printer ...

Cameco Sees As Many As 20 AP1000 Nuclear Reactors On The Horizon

His grandparents had heart disease. At 11, Laurent Simons decided he wanted to fight aging.

Mayo Clinic's AI Can Detect Pancreatic Cancer up to 3 Years Before Diagnosis–When Treatment...

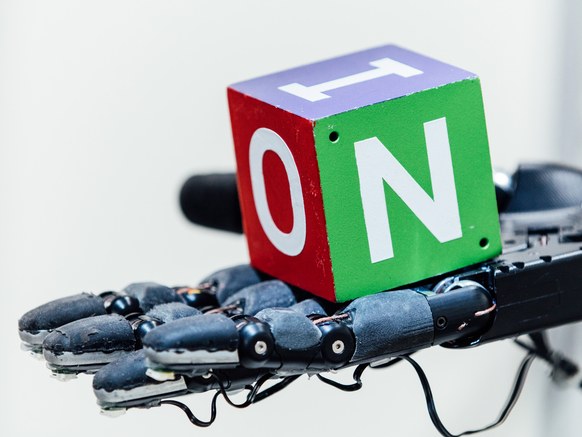

This Robot Hand Taught Itself How to Grab Stuff Like a Human

Elon Musk is worried about AI. So he helped found a research nonprofit, OpenAI, to help cut a path to "safe" artificial general intelligence, as opposed to machines that pop our civilization like a pimple. Yes, Musk's very public fears may distract from other, more real problems in AI. But OpenAI just took a big step toward robots that better integrate into our world by not, well, breaking everything they pick up.

OpenAI researchers have built a system in which a simulated robotic hand learns to manipulate a block through trial and error, then seamlessly transfers that knowledge to a robotic hand in the real world. Incredibly, the system ends up "inventing" characteristic grasps that humans already commonly use to handle objects. Not in a quest to pop us like pimples—to be clear.

The researchers' trick is a technique called reinforcement learning. In a simulation, a hand, powered by a neural network, is free to experiment with different ways to grasp and fiddle with a block. "It's just doing random things and failing miserably all the time," says OpenAI engineer Matthias Plappert. "Then what we do is we give it a reward whenever it does something that slightly moves it toward the goal it actually wants to achieve, which is rotating the block."
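To make that reward idea concrete, here is a minimal sketch in Python. It is not OpenAI's system, which trains a neural network in a physics simulation; this toy swaps in tabular Q-learning on a made-up problem. The block's orientation is reduced to 12 positions on a circle, and every name and number below (`N_ANGLES`, `GOAL`, `train`, the learning rates) is an assumption chosen for illustration. The only part taken from Plappert's description is the reward: the agent earns a point whenever an action moves the block slightly closer to the target orientation.

```python
import random

# All constants are hypothetical, chosen only to keep the toy small.
N_ANGLES = 12          # block orientation discretized into 12 positions
GOAL = 6               # target orientation
ACTIONS = (-1, 0, 1)   # rotate one step back, hold still, rotate one step forward


def distance(angle):
    """Shortest number of steps around the circle from angle to GOAL."""
    d = abs(angle - GOAL) % N_ANGLES
    return min(d, N_ANGLES - d)


def step(angle, action):
    """Apply an action. The reward is the progress made toward the goal:
    +1 if the block ended up closer, -1 if farther, 0 if unchanged."""
    new_angle = (angle + action) % N_ANGLES
    reward = distance(angle) - distance(new_angle)
    return new_angle, reward


def train(episodes=500, steps=20, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning: try actions, mostly at random early on,
    and nudge the value table toward whatever earned reward."""
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N_ANGLES)]
    for _ in range(episodes):
        angle = rng.randrange(N_ANGLES)
        for _ in range(steps):
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))          # explore
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[angle][i])
            new_angle, reward = step(angle, ACTIONS[a])
            target = reward + gamma * max(q[new_angle])
            q[angle][a] += alpha * (target - q[angle][a])
            angle = new_angle
    return q


def rollout(q, start, max_steps=N_ANGLES):
    """Follow the learned table greedily and return the final orientation."""
    angle = start
    for _ in range(max_steps):
        if angle == GOAL:
            break
        a = max(range(len(ACTIONS)), key=lambda i: q[angle][i])
        angle, _ = step(angle, ACTIONS[a])
    return angle


if __name__ == "__main__":
    table = train()
    print([rollout(table, s) for s in range(N_ANGLES)])
```

Calling `train()` and then `rollout(table, start)` for each starting orientation shows whether the learned table steers the block to `GOAL`. Early in training the agent does, in Plappert's words, "random things"; the small reward for each step of progress is what gradually turns that flailing into a consistent strategy.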