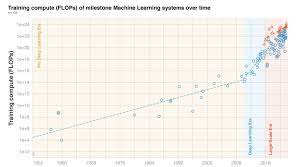

Three Eras of Machine Learning and Predicting the Future of AI

A 2022 study by Sevilla et al., "Compute Trends Across Three Eras of Machine Learning," reports the following:

Before 2010, training compute grew in line with Moore's law, doubling roughly every 20 months.

Since the advent of deep learning in the early 2010s, the scaling of training compute has accelerated, doubling approximately every 6 months.

In late 2015, a new trend emerged as firms developed large-scale ML models with 10- to 100-fold larger training compute requirements.
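The doubling times above translate directly into annual growth factors: a quantity that doubles every T months grows by a factor of 2^(12/T) per year. The short sketch below does that arithmetic using only the figures quoted above, and also estimates how long a 10- or 100-fold jump would take at the Deep Learning Era pace. It is an illustrative calculation, not code from the study, and the function names are hypothetical.

```python
import math


def annual_growth_factor(doubling_months: float) -> float:
    """Growth multiplier over 12 months for a quantity that doubles every `doubling_months`."""
    return 2 ** (12 / doubling_months)


def months_to_scale(factor: float, doubling_months: float) -> float:
    """Months needed to grow by `factor` at a constant doubling time."""
    return math.log2(factor) * doubling_months


# Doubling times quoted in the text, in months.
PRE_DL_DOUBLING = 20  # before 2010, roughly Moore's law
DL_DOUBLING = 6       # Deep Learning Era

if __name__ == "__main__":
    print(f"Pre Deep Learning Era: {annual_growth_factor(PRE_DL_DOUBLING):.2f}x per year")
    print(f"Deep Learning Era:     {annual_growth_factor(DL_DOUBLING):.2f}x per year")
    for jump in (10, 100):
        months = months_to_scale(jump, DL_DOUBLING)
        print(f"{jump}x at a 6-month doubling time: ~{months:.0f} months")
```

By this arithmetic, compute grew about 1.5x per year before 2010 versus 4x per year afterwards, and a 10- to 100-fold jump corresponds to roughly 20 to 40 months of growth at the Deep Learning Era pace.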

Based on these observations, they split the history of compute in ML into three eras: the Pre Deep Learning Era, the Deep Learning Era and the Large-Scale Era. Overall, the work highlights the fast-growing compute requirements for training advanced ML systems.

The authors investigate the compute demands of milestone ML models over time in detail and make the following contributions:

1. They curate a dataset of 123 milestone Machine Learning systems, annotated with the compute it took to train them.
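Given a dataset like this, one common way to estimate a doubling time is an ordinary least-squares fit of log2(training compute) against publication date: the slope is doublings per year, so the doubling time in months is 12 divided by the slope. The sketch below is a minimal version of that technique under stated assumptions; it is not necessarily the study's exact procedure, the function name is hypothetical, and the records are synthetic rather than taken from the 123-system dataset.

```python
import math
from typing import Sequence, Tuple


def fit_doubling_time_months(records: Sequence[Tuple[float, float]]) -> float:
    """Estimate a doubling time (months) via least squares on log2(compute) vs. date.

    `records` holds (publication_year_as_decimal, training_compute_flop) pairs.
    """
    if len(records) < 2:
        raise ValueError("need at least two systems to fit a trend")
    xs = [year for year, _ in records]
    ys = [math.log2(flop) for _, flop in records]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("all systems share the same date; slope is undefined")
    slope = sxy / sxx  # doublings per year
    if slope <= 0:
        raise ValueError("compute is not growing over this window")
    return 12 / slope


if __name__ == "__main__":
    # Hypothetical synthetic records: compute doubles exactly every 0.5 years from 1e17 FLOP in 2012.
    synthetic = [(2012 + 0.5 * k, 1e17 * 2 ** k) for k in range(8)]
    print(f"fitted doubling time: {fit_doubling_time_months(synthetic):.1f} months")
```

Fitting in log space turns exponential growth into a straight line, which is why a single slope summarizes each era; with real, noisy data the era boundaries chosen before fitting strongly affect the resulting doubling times.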
