Breaking News

Woman flies to Seattle to show how all the businesses have left their downtown...

James Freeman ILLEGAL ARREST DROPPED & HUGE LAWSUIT

Jamie Kennedy blasts LA mayoral election swing: 'Literal crime scene'

Here we go, the Los Angeles Times is admitting that yes, tens of thousands of mail in ballots...

Top Tech News

World's longest-range airliner takes to the skies

Batteries That Use Sodium Instead of Lithium Could Be Low-Cost Rival to Tesla's

Elon and SpaceX Have Made AI Training 10 Times Faster

Oklo COO Says Nuclear Waste Could Power America For 150 Years

SpaceX Announces LARGEST Starship Mission Ever! They've never done this before!

Cars Are Fast Becoming Dystopian Prison Pods...

Our Emergency Water Plan Wasn't Good Enough - So We Built This

Sodium Ion Batteries Can Reach 100 Gigawatt Per Hour Per Year Scale in 2027

Juiced Bikes proves capable electric motorcycles don't have to cost a lot

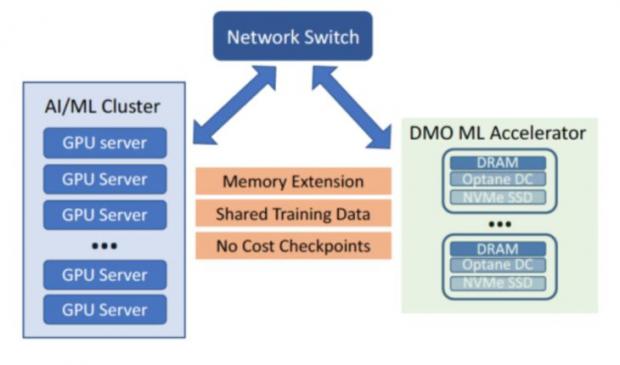

Beyond Big Data is Big Memory Computing for 100X Speed

Big Memory Computing is a new category that is sparking a revolution in data center architecture, one in which all applications run in memory. Until now, in-memory computing has been restricted to a narrow range of workloads because DRAM is limited in capacity and volatile, and because software for high availability was lacking. Big Memory Computing combines DRAM, persistent memory and Memory Machine software so that memory is abundant, persistent and highly available.

Transparent Memory Service

Scale-out to Big Memory configurations.

100x more than current memory.

No application changes.

Big Memory Machine Learning and AI

* Model and feature libraries today are often spread across DRAM and SSD because DRAM capacity is insufficient, which slows performance.

* MemVerge Memory Machine pools the DRAM and PMEM capacity of the cluster, allowing the model and feature libraries to reside entirely in memory.

* Transactions per second (TPS) can increase 4X, while inference latency can improve 100X.

Headlight projectors turn your car into a drive-in theater
