Breaking News

What's the Point of Rocketing to the Moon and Mars?

Apple FORCES iPhone Users To Prove Age With ID Or Lose Unrestricted Internet Access

Iran War Silver Lining: NATO's Death Knell?

US Retail Sales Jumped Most In 8 Months In February

Top Tech News

DARPA O-Circuit program wants drones that can smell danger...

Practical Smell-O-Vision could soon be coming to a VR headset near you

ICYMI - RAI introduces its new prototype "Roadrunner," a 33 lb bipedal wheeled robot.

Pulsar Fusion Ignites Plasma in Nuclear Rocket Test

Details of the NASA Moonbase Plans Include a Fifteen Ton Lunar Rover

THIS is the Biggest Thing Since CGI

BACK TO THE MOON: Crewed Lunar Mission Artemis II Confirmed for Wednesday...

The Secret Spy Tech Inside Every Credit Card

Red light therapy boosts retinal health in early macular degeneration

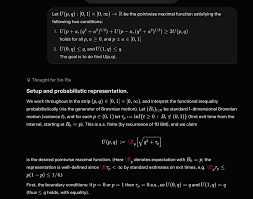

xAI Grok 4.20 and OpenAI GPT-5.2 Are Solving Significant Previously Unsolved Math Problems

The Grok 4.20 work demonstrates AI quickly generating novel math objects (Bellman functions for optimal control), with links to isoperimetric profiles and the Takagi function (RH-related). It advances the understanding of square function instabilities. It does not change the world immediately, but it highlights AI's role in taking "small steps" toward deeper insights in stochastic processes and analysis.

OpenAI's GPT-5.2 has also solved significant open math problems that are decades old.

Paul Erdős, the prolific Hungarian mathematician, posed over 1,000 open problems across fields like combinatorics, number theory, and graph theory. Many remain unsolved, with some offering cash prizes. Recently, large language models (LLMs) like OpenAI's GPT-5.2 (released in late 2025) have made headlines for contributing to solutions, often autonomously or with minimal human guidance. These are tracked on the erdosproblems.com site, maintained by mathematician Thomas Bloom, and verified by experts like Terence Tao (Fields Medalist).

Overall, since Christmas 2025, 15 Erdős problems have been marked solved, with 11 crediting AI involvement. Tao's analysis is that there are 8 cases of meaningful autonomous AI progress and 6 where AI built on prior research.

AI doesn't just generate initial proofs; it also excels at iteratively refining them. For instance, when turning raw AI-generated arguments into full research papers, tools like GPT can:

– Automatically rewrite sections for better clarity.

– Adjust phrasing, variable choices, or logical flow.

– Incorporate historical context, literature references, and natural-language explanations.

– Produce multiple drafts quickly, moving the text away from the "feel of a generic AI-produced document" and toward something approaching acceptable research-paper quality.

In one specific example Tao referenced (around the autonomous solve of Erdős #728), the collaboration led to a new writeup of the proof that he judged to be within the ballpark of an acceptable standard for a research paper, with room for improvement but far beyond the initial raw output.

Tao describes this as shifting proof-writing toward a search problem at scale. AI can generate thousands of mini-lemmas, variations, or exposition styles, then use checkers (like Lean) to validate them and cull the weak ones, while humans focus on high-level direction. He calls this "vibe-coding" or rapid iteration, which is complementary to human strengths. This kind of vibe-math proofing is useful because it lets more possibilities be considered more rapidly. A good human mathematician can use it to enhance and speed up their research.
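The generate-then-cull loop can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions: the candidate "mini-lemmas" are made-up integer identities, and `toy_checker` is a hypothetical stand-in that tests finitely many cases. A real pipeline would hand each candidate to a proof checker such as Lean, since passing a finite test is not a proof.

```python
from itertools import product
from typing import Callable, NamedTuple


class Candidate(NamedTuple):
    """A hypothetical 'mini-lemma': a claimed statement about two integers."""
    name: str
    claim: Callable[[int, int], bool]


def toy_checker(candidate: Candidate, bound: int = 20) -> bool:
    """Stand-in for a formal checker (assumption, not Lean).

    It only tests the claim on all integer pairs in [-bound, bound],
    so it can reject false claims but cannot prove true ones.
    """
    values = range(-bound, bound + 1)
    return all(candidate.claim(a, b) for a, b in product(values, repeat=2))


def cull(candidates: list[Candidate]) -> list[Candidate]:
    """Keep only the candidates that the checker accepts."""
    return [c for c in candidates if toy_checker(c)]


# Hypothetical machine-generated candidates; two are deliberately false.
CANDIDATES = [
    Candidate("square_of_sum",
              lambda a, b: (a + b) ** 2 == a * a + 2 * a * b + b * b),
    Candidate("naive_square",
              lambda a, b: (a + b) ** 2 == a * a + b * b),
    Candidate("sum_of_squares_bound",
              lambda a, b: a * a + b * b >= 2 * a * b),
    Candidate("abs_additive",
              lambda a, b: abs(a + b) == abs(a) + abs(b)),
]

survivors = [c.name for c in cull(CANDIDATES)]
print(survivors)  # ['square_of_sum', 'sum_of_squares_bound']
```

The point of the design is the split of labor: generation can be cheap and sloppy because the checker, not the generator, decides what survives. The human then only has to look at the short list that remains.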

Hydrogen-powered business jet edges closer to certification
